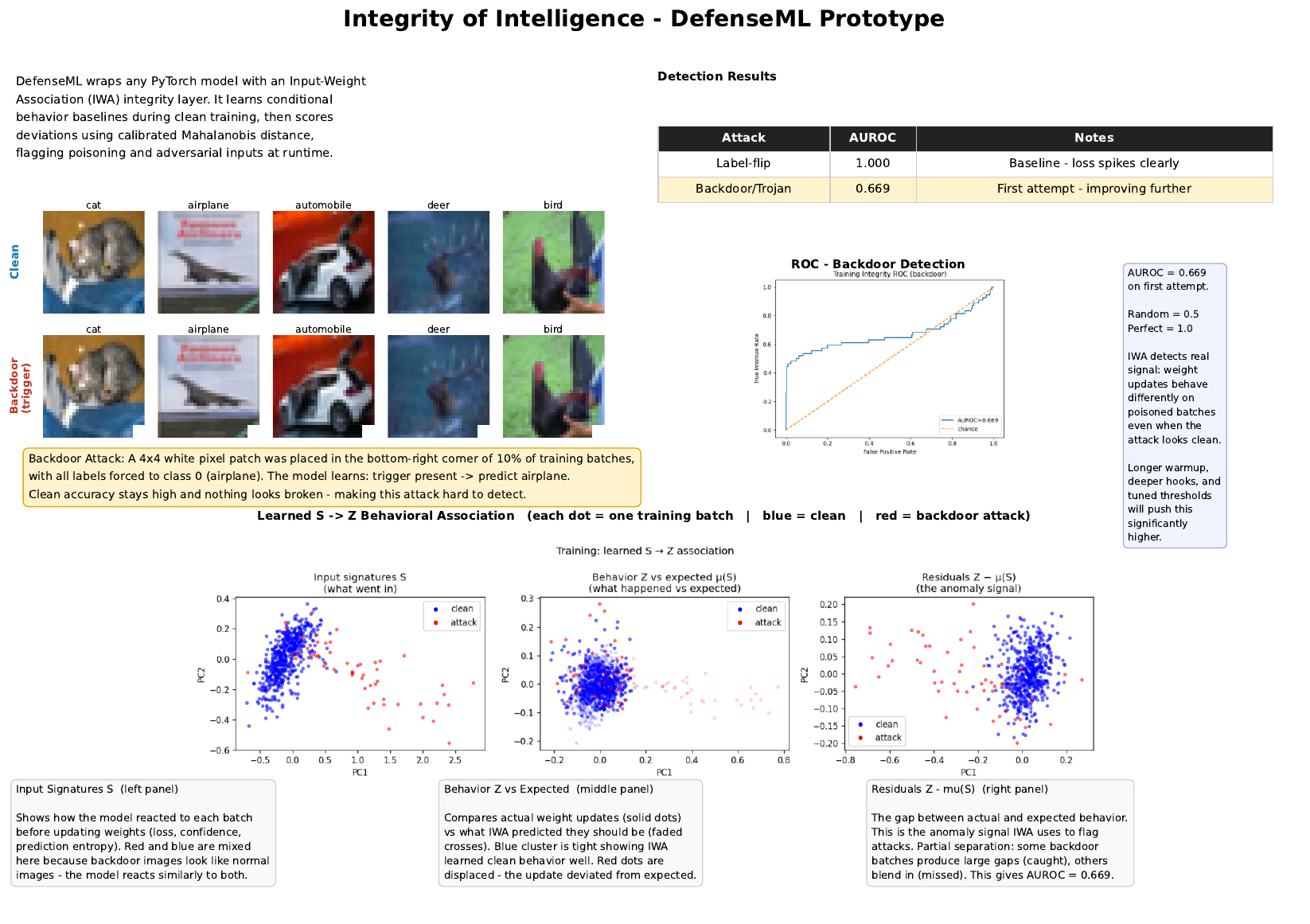

DefenseML Input-Weight Association (IWA) is a runnable PyTorch prototype for building integrity models that learn normal behavior signatures and flag anomalies across different attack patterns.

The prototype supports multiple integrity checks across model lifecycle phases, with attack configuration exposed through CLI flags: --attack-type, --attack-prob, --target-class, --trigger-size, and --eps.

The prototype learns conditional associations in model behavior and turns them into portable integrity artifacts.

Captures batch-to-update associations during training and identifies suspicious updates under poisoning attacks.

Models input behavior against activation and sensitivity signatures to catch runtime anomalies.

Exports selected hooks, learned conditional weights, inverse covariance, and threshold without pickle or joblib.
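To make the pickle-free export concrete, here is a minimal sketch of how such an artifact could be written to and read back from JSON, then used for scoring. The field names (`hooks`, `mean`, `inv_cov`, `threshold`), the helper functions, and the use of a squared Mahalanobis distance are assumptions for illustration; the prototype's actual schema and scoring rule may differ, and the learned conditional weights are left out for brevity.

```python
import json
import numpy as np

def export_artifact(hooks, mean, inv_cov, threshold):
    """Serialize an integrity model to a JSON string (no pickle or joblib)."""
    return json.dumps({
        "hooks": list(hooks),                      # hypothetical field names
        "mean": np.asarray(mean).tolist(),
        "inv_cov": np.asarray(inv_cov).tolist(),
        "threshold": float(threshold),
    })

def load_artifact(text):
    """Parse the JSON string and check that the shapes are consistent."""
    raw = json.loads(text)
    mean = np.asarray(raw["mean"], dtype=float)
    inv_cov = np.asarray(raw["inv_cov"], dtype=float)
    if inv_cov.shape != (mean.size, mean.size):
        raise ValueError("inv_cov shape does not match mean")
    return {
        "hooks": raw["hooks"],
        "mean": mean,
        "inv_cov": inv_cov,
        "threshold": float(raw["threshold"]),
    }

def is_anomalous(artifact, signature):
    """Flag a signature whose squared Mahalanobis distance exceeds the threshold."""
    diff = np.asarray(signature, dtype=float) - artifact["mean"]
    score = float(diff @ artifact["inv_cov"] @ diff)
    return score > artifact["threshold"]
```

Because the artifact holds only lists, strings, and floats, it can be inspected in a text editor and loaded without executing any code, which is the main reason to prefer JSON over pickle here.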

A simple four-step flow from instrumentation to deployment-ready integrity artifacts.

Attach hooks and telemetry capture for training updates or inference activations.

Learn conditional associations that represent expected behavior under clean operation.

Compute anomaly scores and thresholds while clean and attacked samples are mixed in evaluation.

Export JSON integrity models and validate them through import and verification commands.
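The middle two steps of this flow can be sketched in a few lines. The version below is one plausible reading, not the prototype's implementation: it assumes the "conditional association" is a linear least-squares map from input features to response features, scores residuals with a squared Mahalanobis distance, and sets the threshold at a quantile of the clean scores. All function names and the synthetic data are hypothetical.

```python
import numpy as np

def fit_association(x_clean, y_clean, quantile=0.99, ridge=1e-6):
    """Fit the expected response given the input on clean data."""
    # Assumption: a linear least-squares map stands in for the learned conditional weights.
    weights, *_ = np.linalg.lstsq(x_clean, y_clean, rcond=None)
    residuals = y_clean - x_clean @ weights
    # A small ridge term keeps the residual covariance invertible.
    cov = np.cov(residuals, rowvar=False) + ridge * np.eye(y_clean.shape[1])
    inv_cov = np.linalg.inv(cov)
    clean_scores = np.einsum("ij,jk,ik->i", residuals, inv_cov, residuals)
    threshold = float(np.quantile(clean_scores, quantile))
    return weights, inv_cov, threshold

def anomaly_scores(x, y, weights, inv_cov):
    """Squared Mahalanobis distance of each residual from the clean fit."""
    residuals = y - x @ weights
    return np.einsum("ij,jk,ik->i", residuals, inv_cov, residuals)

# Synthetic stand-ins for captured telemetry (not real model data).
rng = np.random.default_rng(0)
true_map = rng.normal(size=(3, 2))
x_clean = rng.normal(size=(500, 3))
y_clean = x_clean @ true_map + 0.1 * rng.normal(size=(500, 2))
weights, inv_cov, threshold = fit_association(x_clean, y_clean)

# Mixed evaluation: the last three rows simulate attacked samples.
x_eval = rng.normal(size=(6, 3))
y_eval = x_eval @ true_map
y_eval[3:] += 5.0
flags = anomaly_scores(x_eval, y_eval, weights, inv_cov) > threshold
```

The fitted weights, inverse covariance, and threshold are exactly the kind of quantities that can then be exported as a JSON artifact.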

Run one of the demos below from the repository root based on what you want to test.

python -m venv .venv
source .venv/bin/activate
pip install -r requirements.txt

# Training-time integrity demo (CIFAR-10 + label flip)
python -m iwa_integrity train-demo \
  --warmup-steps 200 \
  --steps-after-warmup 600 \
  --attack-prob 0.10 \
  --attack-type label-flip \
  --out-dir assets

# Training-time integrity demo (CIFAR-10 + backdoor)
python -m iwa_integrity train-demo \
  --warmup-steps 200 \
  --steps-after-warmup 600 \
  --attack-prob 0.10 \
  --attack-type backdoor \
  --target-class 0 \
  --trigger-size 4 \
  --out-dir assets
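For readers unfamiliar with the two attack types named in these commands, the sketch below shows what label flipping and a patch backdoor typically do to a batch. It is a generic illustration, not the prototype's code: the function names, the bottom-right trigger position, the trigger value of 1.0, and the NCHW image layout are all assumptions, and the parameters simply mirror the CLI flags above.

```python
import numpy as np

def label_flip(labels, num_classes, attack_prob, rng):
    """Reassign each label to a different class with probability attack_prob."""
    labels = labels.copy()
    hit = rng.random(labels.shape[0]) < attack_prob
    # A non-zero offset guarantees that a flipped label really changes class.
    offsets = rng.integers(1, num_classes, size=int(hit.sum()))
    labels[hit] = (labels[hit] + offsets) % num_classes
    return labels, hit

def backdoor(images, labels, attack_prob, target_class, trigger_size, rng):
    """Stamp a square trigger on selected images and relabel them to target_class."""
    images, labels = images.copy(), labels.copy()
    hit = rng.random(labels.shape[0]) < attack_prob
    # Assumed trigger: a bright square in the bottom-right corner (NCHW layout).
    images[hit, :, -trigger_size:, -trigger_size:] = 1.0
    labels[hit] = target_class
    return images, labels, hit
```

With an attack probability of 0.10, roughly one sample in ten is poisoned, which is why a detector has to separate a small attacked minority from mostly clean updates.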

python -m iwa_integrity ...
--attack-type label-flip | backdoor
--eps 0.25 (FGSM strength)
--export-json assets/integrity.json
python -m iwa_integrity import assets/integrity.json
assets/

Submit your details for review. Approved users will receive access to the private DefenseML repository.

What to provide: your contact email and a short access reason.

Review flow: requests are emailed to the maintainers for manual approval.

Note: Approved users can be contacted after review for repository onboarding.

Use this page as the front door for both training-time and inference-time integrity workflows.